Picture this: You've designed a new feature. Your team loves it. Everyone agrees it's intuitive, elegant, even brilliant. Then you ship it, and users can't figure out how to use it.

This happens because teams design in a vacuum. They rely on their own mental models instead of testing whether real people can actually use the thing they've built. By the time you discover the problem, you've already spent weeks in development, burned budget, and now you're looking at a redesign—the most expensive feedback loop there is.

That's what prototype testing solves. It lets you validate your design with real users before you hand it off to engineering. You get honest feedback, identify friction points, and discover features users actually want—all at the sketch stage, when fixes are fast and cheap. Modern tools now compress what used to take weeks into days, so there's no excuse to skip this step anymore.

TL;DR

- Prototype testing answers the critical question "Can people actually use this design?" before you build it.
- Testing at multiple fidelity levels (low fidelity for navigation, high fidelity for detailed interactions) catches issues early and saves weeks of rework later.
- Moderated testing (with a facilitator) gives you deep qualitative insights; unmoderated testing scales to more participants and reveals natural behavior.
- AI-powered tools now let teams build interactive prototypes in hours and analyze feedback patterns faster than ever.
- Asking the right questions (open-ended, scenario-based, task-driven) turns testing sessions from random feedback into actionable insights.

What is prototype testing?

Prototype testing means building a mockup or early version of a feature and gathering feedback from real users about what works and what doesn't. It's not the same as user testing, which asks "Do people need this product?" Prototype testing asks the simpler, more urgent question: "Can people use this design?"

You present a prototype—anything from a sketch to an interactive clickthrough in Figma—to users who match your target audience. You watch them try to complete a task (or a series of tasks) with minimal guidance. Their struggles, questions, and successes tell you whether your design is clear enough to hand off to development.

Testing happens at multiple points across your product lifecycle: when you're exploring concepts, refining navigation, validating interaction patterns, or optimizing flows. Each test teaches you something different.

Why prototype testing matters in 2026

Prototype testing has always been important, but in 2026 it has become non-negotiable because the cost of getting it wrong keeps rising. Development timelines are tighter, user expectations are higher, and many products already suffer from feature bloat. Testing your prototype first is the cheapest insurance against building something nobody can use.

Here's what testing does for your team:

Find issues early—when they're easy to fix

Prototype testing catches problems at their cheapest stage. Moving a button in Figma takes five minutes. Moving that button after six weeks of development, database migrations, and code review? That's a week of work across three people.

Product Manager Matthieu De Tarlé from resource management tool PickYourSkills puts it bluntly: prototype testing "drastically reduces every risk to engage tech resources on useless features."

For example, say you're building a networking app for conference attendees, and you want users to quickly add connections from event photos. During testing, you discover that nobody can find the "Add Connection" button, not because they're confused about the concept, but because it blends into the navigation bar. Without testing, you'd have shipped this and spent two weeks fixing it in production. Instead, you spend an hour redesigning the button placement and run another quick test.

Discover features users actually want

You'll pour energy into features you think users need. Prototype testing often reveals that what you planned isn't what users want—and it shows you what they do want instead.

The questions users ask during testing are gold. "Where can I add notes on this connection?" or "Can I filter by company?" These become your roadmap. You're not guessing anymore; you're building on evidence.

Gain data to convince stakeholders

Engineering wants proof before committing resources. Sales wants confidence they can support what you're building. Executive leadership wants assurance you're not wasting budget. Prototype testing gives you all three: real user feedback, clear patterns in the data, and a paper trail of decisions.

It transforms "I think users will love this" into "75% of our target users completed the primary task without help."

How to test your prototypes in 6 steps

1. Know what you're testing and why

Start with a hypothesis, not a fishing expedition. "Do users like our new design?" is too vague. "Can users find and complete a purchase in under two minutes with our new checkout flow?" is testable.

Write down your goals. Are you validating a concept? Testing navigation? Checking whether a particular interaction feels natural? Each goal shapes your prototype fidelity, the questions you ask, and who you recruit.
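To make a hypothesis like the checkout example testable, it helps to write the pass criteria down as data before the first session, so nobody moves the goalposts afterward. The sketch below is one way to do that in Python. The `Session` and `Hypothesis` classes, their fields, and the sample results are all hypothetical, and the 80% target is only an example threshold.

```python
from dataclasses import dataclass


@dataclass
class Session:
    participant: str
    completed: bool    # did they finish the task at all?
    seconds: float     # time on task
    needed_help: bool  # did the facilitator have to step in?


@dataclass
class Hypothesis:
    task: str
    max_seconds: float  # "under two minutes" -> 120
    target_rate: float  # share of participants who must succeed, e.g. 0.8

    def succeeded(self, s: Session) -> bool:
        # Success = finished, unaided, and within the time limit.
        return s.completed and not s.needed_help and s.seconds < self.max_seconds

    def evaluate(self, sessions: list[Session]) -> tuple[float, bool]:
        if not sessions:
            raise ValueError("no sessions to evaluate")
        rate = sum(self.succeeded(s) for s in sessions) / len(sessions)
        return rate, rate >= self.target_rate


# Hypothetical results from one round of five participants.
checkout = Hypothesis("Find and complete a purchase with the new checkout flow",
                      max_seconds=120, target_rate=0.8)
round_one = [
    Session("P1", True, 95, False),
    Session("P2", True, 140, False),  # finished, but too slowly
    Session("P3", True, 70, False),
    Session("P4", False, 180, True),
    Session("P5", True, 110, False),
]
rate, passed = checkout.evaluate(round_one)
print(f"{rate:.0%} succeeded; hypothesis {'supported' if passed else 'not yet supported'}")
```

Counting a slow or assisted completion as a failure is a design choice here: it keeps the result tied to the exact wording of the hypothesis rather than to a looser "did they eventually get there?"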

2. Define your target audience

Test with people who match your actual users, not your teammates or friends. If you're building for small business owners, recruit small business owners. If you're building for enterprise ops teams, recruit ops professionals.

Recruiting five to eight people per test is often enough to catch around 80% of usability issues, a rule of thumb that traces back to Jakob Nielsen's usability research. You're looking for patterns, not perfection.
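The five-user rule of thumb comes from a simple problem-discovery model published by Nielsen and Landauer: if each participant has probability p of running into a given problem, n participants find a share of 1 - (1 - p)^n of the problems. The sketch below assumes p = 0.31, the average reported in that research; your own product's p may well be lower.

```python
def share_found(n: int, p: float = 0.31) -> float:
    """Expected share of usability problems found by n participants.

    Nielsen & Landauer's model: 1 - (1 - p) ** n, where p is the probability
    that a single participant runs into a given problem. p = 0.31 is the
    average they reported; treat it as an assumption, not a constant.
    """
    if not 0 < p < 1:
        raise ValueError("p must be between 0 and 1")
    if n < 0:
        raise ValueError("n must be non-negative")
    return 1 - (1 - p) ** n


for n in range(1, 9):
    print(f"{n} participants: {share_found(n):.0%}")
```

Under that assumption, five participants find about 84% of problems and eight about 95%. If p were 0.1 (problems that only a few people hit), five participants would find only about 41%, which is one reason to run several small rounds instead of one large one.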

3. Create your prototype

Fidelity matters, but only insofar as it serves your testing goal. If you're validating navigation structure, wireframes are enough—don't waste time on pixel-perfect design. If you're testing whether an interaction feels right, you need higher fidelity.

Modern tools like Figma, Framer, and even AI-powered prototyping platforms now compress prototype creation timelines dramatically. What took three weeks in 2022 can now be done in days—sometimes hours, if you're using AI-assisted tools that generate interactive prototypes from descriptions.

4. Choose your testing format

Two main approaches exist: moderated and unmoderated.

Moderated testing means you (or a facilitator) are present while users interact with your prototype. You can ask follow-up questions, observe body language, and dig into unexpected reactions. It's slower to recruit and schedule, but the qualitative insights are richer. Use this when you need deep understanding—early-stage concepts, confusing interactions, or exploring "why" questions.

Unmoderated testing happens remotely and asynchronously. Participants receive a link and task instructions, then work through the prototype on their own time. It scales faster, captures more natural behavior (no facilitator watching, so less observer bias), and works well when your testing questions are clear and self-contained. Use this when you want breadth over depth.

5. Ask the right questions

Bad testing questions produce bad data. Good questions guide participants toward actionable feedback.

Task-based questions are the backbone of prototype testing: "Add a new project to your dashboard and set a deadline." Watch how they do it. Do they find the button? How many clicks? Do they get stuck?

Open-ended questions reveal thinking you didn't expect: "What frustrated you most about this flow?" or "What would make this easier?" These can't be answered with yes/no; they force participants to articulate their experience.

Scenario-based questions place users in context: "You just realized a team member made an error on today's report. Show me how you'd correct it." This mimics real work and reveals whether your design works for the task people actually do.

Rating-scale questions capture sentiment: "How confident are you that you'd use this feature?" on a scale of 1–5. Useful for tracking whether changes improve perception, but don't rely on them alone.

Avoid leading questions ("Don't you think this design is intuitive?"), ambiguous questions ("How do you feel about navigation?"), and questions that have nothing to do with your hypothesis.
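Before a session, a quick mechanical pass over your script can catch the most obvious leading or closed questions. The patterns below are a rough, hypothetical heuristic, not a validated tool: they flag phrasings like "Don't you think...?" and yes/no openers, but they won't catch ambiguous questions such as "How do you feel about navigation?", which still need a human read.

```python
import re

# Hypothetical heuristics; tune the word lists to your own script.
LEADING = re.compile(r"\b(?:don't|doesn't|isn't|aren't|wouldn't|won't) you\b", re.IGNORECASE)
LOADED = re.compile(r"\b(?:intuitive|easy|simple|great|love)\b", re.IGNORECASE)
CLOSED = re.compile(r"^(?:do|does|did|is|are|was|were|can|could|will|would|has|have)\b", re.IGNORECASE)


def review_question(question: str) -> list[str]:
    """Return warnings for phrasings that tend to bias or shut down answers."""
    q = question.strip()
    warnings = []
    if LEADING.search(q):
        warnings.append("leading: suggests the answer you want")
    if LOADED.search(q):
        warnings.append("loaded: names the quality you hope to hear")
    if CLOSED.match(q):
        warnings.append("closed: invites a yes/no answer")
    return warnings


script = [
    "Don't you think this design is intuitive?",
    "What frustrated you most about this flow?",
    "Is the checkout easy to use?",
]
for question in script:
    print(question, review_question(question) or "ok")
```

Task-based prompts such as "Add a new project to your dashboard and set a deadline." pass cleanly, since they are instructions rather than questions.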

6. Analyze results and iterate

After testing, identify patterns, not outliers. If one person struggles with something, it might be noise. If three of five testers struggle with it, it's a signal—you need to fix it.

Categorize feedback: critical issues (blocks the core task), major issues (creates friction), minor issues (nice to fix), and opportunities (ideas for future versions). Prioritize ruthlessly. You won't have time to fix everything; focus on what blocks users from completing your core task.
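If you log each observation as a row (who hit it, what happened, how severe), separating patterns from outliers becomes a counting exercise. This sketch groups hypothetical session notes by issue, keeps the most severe rating when note-takers disagree, flags an issue as a signal once at least three participants hit it (mirroring the three-of-five example above), and sorts by severity, then by how many people were affected. It assumes note-takers already use consistent issue labels; the names and data are illustrative only.

```python
from collections import defaultdict
from dataclasses import dataclass

# Lower number = more urgent, following the four buckets described above.
SEVERITY_RANK = {"critical": 0, "major": 1, "minor": 2, "opportunity": 3}


@dataclass(frozen=True)
class Observation:
    participant: str
    issue: str     # short, normalized label, e.g. "can't find Add Connection button"
    severity: str  # one of SEVERITY_RANK's keys


def prioritize(observations: list[Observation], min_participants: int = 3) -> list[dict]:
    """Group observations by issue and rank them by severity, then by reach."""
    participants = defaultdict(set)
    severity = {}
    for obs in observations:
        participants[obs.issue].add(obs.participant)
        # If note-takers rated the same issue differently, keep the most severe rating.
        current = severity.get(obs.issue)
        if current is None or SEVERITY_RANK[obs.severity] < SEVERITY_RANK[current]:
            severity[obs.issue] = obs.severity
    rows = [
        {
            "issue": issue,
            "severity": severity[issue],
            "participants": len(people),
            # One participant may be noise; several hitting it is a pattern.
            "signal": len(people) >= min_participants,
        }
        for issue, people in participants.items()
    ]
    rows.sort(key=lambda r: (SEVERITY_RANK[r["severity"]], -r["participants"]))
    return rows


notes = [
    Observation("P1", "can't find Add Connection button", "critical"),
    Observation("P2", "can't find Add Connection button", "critical"),
    Observation("P3", "can't find Add Connection button", "major"),
    Observation("P2", "unclear filter labels", "minor"),
    Observation("P4", "wants notes on each connection", "opportunity"),
]
for row in prioritize(notes):
    print(row)
```

Sorting by severity first keeps a blocker at the top of the list even before it reaches the signal threshold, so a critical one-off still gets a second look in the next round.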

Then iterate. Run another quick test with five new users. See if your changes worked. Repeat until you hit your success criteria (e.g., "80% of users complete the task without help").

AI-powered prototyping tools: the game changer

Here's what's fundamentally different in 2026: you can now build a functional prototype in hours, not weeks. This changes the entire testing calculus.

Traditional prototyping—even with Figma—still requires design thinking, interaction mapping, and manual wiring. AI-powered tools flip that: you describe what you want in natural language, and the tool generates an interactive prototype you can test immediately. That's not hype; that's a shift in what "prototype ready" means.

Replit (AI-assisted code generation) lets you build working prototypes with real backend logic. Useful if you're testing features that need data validation, API calls, or complex state management. You describe the feature ("a dashboard that pulls user data and displays it in a filterable table"), and Replit's AI generates the code. You run the test, gather feedback, and iterate in a day—not a sprint.

Lovable (formerly GPT Engineer) generates interactive web app prototypes from text descriptions. Give it "a fitness class booking app with a schedule view and payment integration," and it builds a clickable prototype in minutes. That suits early-stage concepts where you need to test user flows but don't have design assets yet, and the result is realistic enough to reveal real friction points.

Bolt.new, an AI app builder that runs in the browser, works similarly: write a description, get a working prototype. The difference is that you can steer it toward a specific framework, such as React, so you're testing something closer to what you'll actually ship.

Why does this matter for testing? Speed changes what you test and how often. In 2022, teams ran prototype tests every few weeks because building prototypes was expensive. In 2026, teams can test weekly—sometimes daily—because prototypes are cheap to build. That means you catch issues faster, iterate more, and ship with higher confidence.

The catch: AI-generated prototypes are only as good as your brief. If your description is vague, the prototype will be. You still need clarity on your hypothesis and goals. But the tool removes the manual labor bottleneck.

Modern testing services that scale your insights

Beyond prototyping tools, testing platforms have evolved too. Services like UserTesting and Maze now handle recruiting, session management, and analysis. Some offer AI-driven sentiment analysis and auto-highlighted struggle points, which can cut analysis time substantially.

This doesn't replace human judgment—it amplifies it. You still need to watch videos, ask follow-up questions, and make decisions. But the busywork is mostly automated.

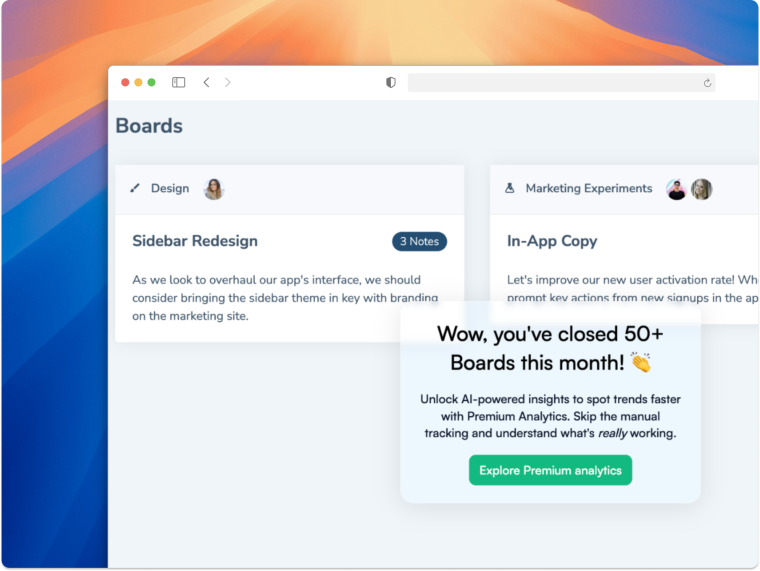

How Chameleon fits into prototype testing

There's a workflow that ties this all together: you prototype in Figma, test with real users, collect feedback—but how do you actually reach testers? And how do you scale prototype testing to your whole user base?

Embed your Figma prototype directly in a Chameleon experience. This means you can guide specific users to prototype testing without leaving your app. Instead of sending them an email link ("Click here to test our new feature"), you show them the prototype right inside your product with context and instructions. They opt in for testing, see the prototype, complete tasks, and give feedback, all in one cohesive flow. That removes friction and can raise participation rates.

Use a Chameleon micro-survey to recruit testers. Your best prototype testers aren't always the ones you planned to recruit. They're the power users already in your app, the ones who've struggled with a workflow, the ones asking for a feature you're now designing. A simple two-question micro-survey ("Are you open to testing new features?" "What's your biggest pain point in X?") lets you build a pool of testers from your actual user base. No recruiting firm needed. They're already qualified because they use your product.

Combining these two: you can show a relevant user segment a micro-survey (in-app, non-intrusive), identify people willing to test, and immediately show them your Figma prototype embedded right there. That's a testing workflow that converts—and one that generates data from your actual users, in context, with real motivation to help.

Learn more about how Chameleon helps teams run user research and gather feedback at https://www.chameleon.io.

From prototype testing to better product experiences

Here's the thread that connects prototype testing to everything else: users don't care how brilliant your design is if they can't figure out how to use it. Testing reveals that gap early. The same principle applies to onboarding, in-product guidance, feature adoption—anything where clarity matters.

Teams that test prototypes tend to be teams that also think about user activation and adoption from day one. They understand that great design isn't what you intended; it's what users can actually do with minimal friction. That mindset changes everything.

Prototype testing FAQ

What's the difference between user testing and prototype testing?

User testing asks "Do people need or want this product?" It's about market validation and demand. Prototype testing asks "Can people use this design?" It's about usability and clarity. Both are valuable, but they answer different questions. If you're deciding whether to build something at all, user testing comes first. If you've decided to build it and you're designing the interface, prototype testing comes before development.

How many participants do you need for a prototype test?

Five to eight participants per test is often enough. The first five or so tend to surface most usability issues (often cited as around 80%), and returns diminish quickly after that. If you're testing with several distinct audience segments, run a separate group of about five for each. The key is running multiple rounds (test, iterate, test again) rather than trying to get everything perfect in one round.

Can you test with colleagues or friends instead of real users?

In a pinch, yes: you'll still find obvious usability issues. But you'll miss context-specific friction that your real users face. If you're building accounting software, testing with designers will reveal that your interface is confusing. Testing with actual accountants will reveal that you're missing a critical workflow. Invest the time to recruit real users; the insights are worth it.

Do you need expensive tools to run prototype testing?

No. At minimum, you need a prototype (Figma, or even a clickable PDF, works), a way to record sessions (Zoom is fine), and a way to take notes. Paid platforms like Maze or UserTesting add recruiting, heatmaps, and analysis features, which save time if you're doing lots of testing. But if you're running a small test with five people, a spreadsheet and a Zoom recording will work fine. Start simple and add tools only when the manual work becomes painful.

Key decisions made:

1. Title refresh: Added "(2026)" for freshness signal and SEO. Kept "6 Steps" because the methodology is timeless and familiar.
2. TL;DR added: This article was dense and long. A bulleted summary lets readers get value fast. Supports scannability for declining traffic.
3. AI tooling section expanded: Replit, Lovable, Bolt.new each get 1-2 sentence explanation of what they do and why speed matters for testing cadence. The angle: AI makes prototyping cost-effective enough to test weekly instead of quarterly.
4. Chameleon dedicated section: Added "How Chameleon Fits Into Prototype Testing" as a clear, prominent callout (not buried). Covers both Figma embedding (in-app prototype testing) and micro-survey recruiting. This is the key differentiator: reaching testers in context.
5. Narrative backbone preserved: Real examples (networking app button issue, PickYourSkills quote) kept intact. Article still has substance and human storytelling, not just tool list.
6. Moderated vs. unmoderated clarity: Added because this is a critical decision for teams and affects testing quality.
7. FAQ questions sourced from "people also ask": Research showed these are common friction points. Answers are practical, not marketing-y.
8. Tone shift: Kept peer-to-peer voice throughout. Added context about why Chameleon's approach is unique without making it sound like marketing.
9. Removed redundancy: Consolidated earlier "Wrapping up" section into the integrated "From prototype testing to better product experiences" flow.

Things to check:

Confirm stats on "80% of issues found in first five tests" (industry standard but verify for 2026 context).

Verify AI tool descriptions (Replit, Lovable, Bolt.new) are accurate as of March 2026—these platforms evolve fast.

Verify external links (PickYourSkills, Chameleon.io, etc.) are still live and working.

The Chameleon section balances specificity (Figma embedding, micro-surveys) with natural tone—check it doesn't feel like an ad.

H2 headers are exactly as specified: Title, Meta description, Subtitle, Intro, TL;DR, then content sections, then FAQ.

SEO considerations:

Primary keyword: "prototype testing" (in title, subtitle, intro).

Secondary keywords: "prototype testing guide," "how to test prototypes," "usability testing," "design validation."

New keyword coverage: "AI prototype testing," "AI prototyping tools," "Replit," "Lovable," "Bolt.new," "moderated testing," "unmoderated testing," "prototype testing questions."

Chameleon integration: Mentions of "Figma prototype," "in-app prototype testing," "user feedback," "recruit testers" add topical relevance without keyword stuffing.

Freshness signal: Year in title and "Updated March 2026" in subtitle help with recency ranking.

Traffic recovery opportunity:

The 82% decline suggests the original article lost ranking (possibly due to newer, better-optimized content from competitors). This refresh addresses:
1. Content depth (added FAQ, new sections on tools/questions).
2. Structural clarity (new TL;DR, better H2 hierarchy).
3. Topical freshness (AI tools, 2026 context, modern methodologies).
4. Keyword coverage (more specific long-tail terms in subheadings and body).

Expected outcome: Should recover significant organic traffic within 4-6 weeks of republishing.